Exploratory Series: Archaeology and HPC

Running from boulders, jumping across chasms, defeating villains while simultaneously finding ancient and mysterious artifacts: this is archaeology. Except, that’s not quite true. These are common misconceptions people tend to have about the field, and they can drive any hard-working archaeologist insane. In much the same vein, people, including archaeologists, often have misconceptions about high-performance computing (HPC): that it means big computers doing complex calculations that are only useful in fields like astrophysics, computer science, or mechanical engineering. But HPC is not just for these traditional fields. In fact, it has already proven to be a potent tool for archaeologists, and its use in the field will only continue to grow.

We live in a world of Big Data. Our human drive for discovery—to capture and record, to analyze and make sense of the world—has led us to collect massive amounts of data, and archaeologists are no exception. From satellite imaging and LiDAR scanning to 3D reconstruction and agent-based modeling, archaeologists capture and produce data in droves. And without HPC, all this data would go to waste.

High-performance computing refers to the use of powerful computers and advanced software tools to perform complex calculations and simulations that would be difficult or impossible using conventional computing resources. HPC can offer significant benefits for a wide range of applications. Some general benefits of HPC that archaeologists can exploit include:

- Improved speed and efficiency: HPC allows users to perform calculations and simulations much faster than on a regular desktop computer or laptop. This lets users complete tasks quickly, save time and money, and make more informed decisions.

- Ability to handle large datasets: HPC can process and analyze large amounts of data more efficiently than conventional computers, which is becoming increasingly necessary within archaeology.

- Flexibility and scalability: When running on HPC, users can scale to much larger problem sizes and configurations. HPC resources now also include purpose-built accelerator technologies such as GPUs for modern compute-intensive workloads, allowing archaeologists to get the most out of their data.

Archaeology is a massive field of study, and the types of research and work undertaken by archaeologists vary tremendously. The ways in which HPC can help those in the field also vary widely, but this article will focus on three main areas: computational modeling, 3D reconstruction and digital preservation, and remote sensing. Many of these areas have technologies and methods that overlap, and some may even be combined to obtain a more complete picture of the history of a geographical area, but all benefit greatly from, or even rely entirely upon, the use of HPC.

Computational Modeling for Archaeology

Computational modeling has a wide range of uses in the field of archaeology. At its core, computational modeling is using computers to build models or run simulations. For archaeologists, this is typically related to a specific geographical area within a certain time period. The main ways archaeologists can use computational modeling are for building and analyzing digital elevation models—which we will call Terrain Analysis—and for Agent-Based Modeling. Depending on the research goal, the two can often overlap, with information gathered from terrain analysis being used when developing agent-based models. But, regardless of the research specifics, computational modeling benefits significantly from using HPC resources.

Terrain analysis involves using HPC to create models of and extract meaningful information from topographical features. These models can show how a variety of things (people, water, ideas, goods, social connections, etc.) flow across landscapes. Some things terrain analysis can be used to study include:

- Watersheds: hydrological flow modeling

- Peoplesheds: least-cost analysis (the effort of moving across a landscape)

- Viewsheds: highlighting prominent features and what can be seen from where
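To make least-cost analysis a little more concrete, here is a minimal sketch in Python. It assumes a tiny, invented elevation grid and an invented cost rule (one unit per step plus the absolute change in elevation); real studies use far larger elevation models and more carefully calibrated cost functions. The function name `least_cost_path` and the grid values are illustrative only and do not come from any particular GIS package. The search itself is Dijkstra's shortest-path algorithm.

```python
import heapq

# Hypothetical elevation grid (arbitrary units): a ridge of height 9
# sits between the start cell on the left and the goal cell on the right.
ELEVATION = [
    [0, 0, 0, 0],
    [0, 9, 9, 0],
    [0, 9, 9, 0],
]


def least_cost_path(elevation, start, goal):
    """Return (total_cost, path) for the cheapest route between two cells.

    Assumed cost rule: each step to a 4-connected neighbor costs
    1 + |change in elevation|.
    """
    rows, cols = len(elevation), len(elevation[0])
    best = {start: 0.0}      # cheapest known cost to reach each cell
    previous = {}            # back-pointers used to rebuild the path
    queue = [(0.0, start)]   # priority queue ordered by cost so far

    while queue:
        cost, cell = heapq.heappop(queue)
        if cell == goal:
            break
        if cost > best[cell]:
            continue  # stale queue entry; a cheaper route was already found
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                step = 1.0 + abs(elevation[nr][nc] - elevation[r][c])
                new_cost = cost + step
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    previous[(nr, nc)] = cell
                    heapq.heappush(queue, (new_cost, (nr, nc)))

    # Walk the back-pointers from the goal to the start.
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    path.reverse()
    return best[goal], path


cost, path = least_cost_path(ELEVATION, (1, 0), (1, 3))
print(cost, path)
```

In this toy landscape the cheapest route walks around the ridge rather than over it. The same idea, repeated over billions of candidate routes on a continental elevation model, is what pushes this kind of analysis onto HPC systems.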

Terrain analysis relies heavily upon geospatial data, meaning data that includes information related to locations on or near the Earth’s surface. Without this data, accurate landscape models would be impossible to construct; a model needs to know, for example, that a mountain is X meters tall, Y meters in diameter, and located at position Z. As one can imagine, the volume of data for any specific area on Earth is immense. Entire systems, called Geographic Information Systems (GIS), are used to manage and analyze this data. And GIS thrives on HPC. Advancements in data collection and spatial resolution have led to geographical datasets with billions of records. At this scale, GIS software without HPC struggles to access and visualize, much less analyze, the data. HPC gives GIS software the computational power needed to conduct complex and detailed terrain analysis, irrespective of the magnitude of the data.
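A quick back-of-the-envelope calculation shows how a dataset reaches "billions of records." The sketch below assumes a hypothetical survey area of 100 km by 100 km, a 1 m grid cell, and 4 bytes per elevation value; none of these numbers come from a specific study, and the helper names `raster_cells` and `raster_bytes` are made up for illustration.

```python
import math


def raster_cells(width_km, height_km, resolution_m):
    """Number of grid cells needed to cover an area at a given cell size."""
    cols = math.ceil(width_km * 1000 / resolution_m)
    rows = math.ceil(height_km * 1000 / resolution_m)
    return rows * cols


def raster_bytes(width_km, height_km, resolution_m, bytes_per_cell=4):
    """Uncompressed size of the raster, assuming a fixed size per cell."""
    return raster_cells(width_km, height_km, resolution_m) * bytes_per_cell


# Hypothetical 100 km x 100 km area sampled every 1 m.
cells = raster_cells(100, 100, 1.0)
size_gb = raster_bytes(100, 100, 1.0) / 1e9
print(f"{cells:,} cells, about {size_gb:.0f} GB uncompressed")
```

Under these assumptions a single elevation layer already holds ten billion values and about 40 GB of raw data, before any derived layers such as slope or visibility are added.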

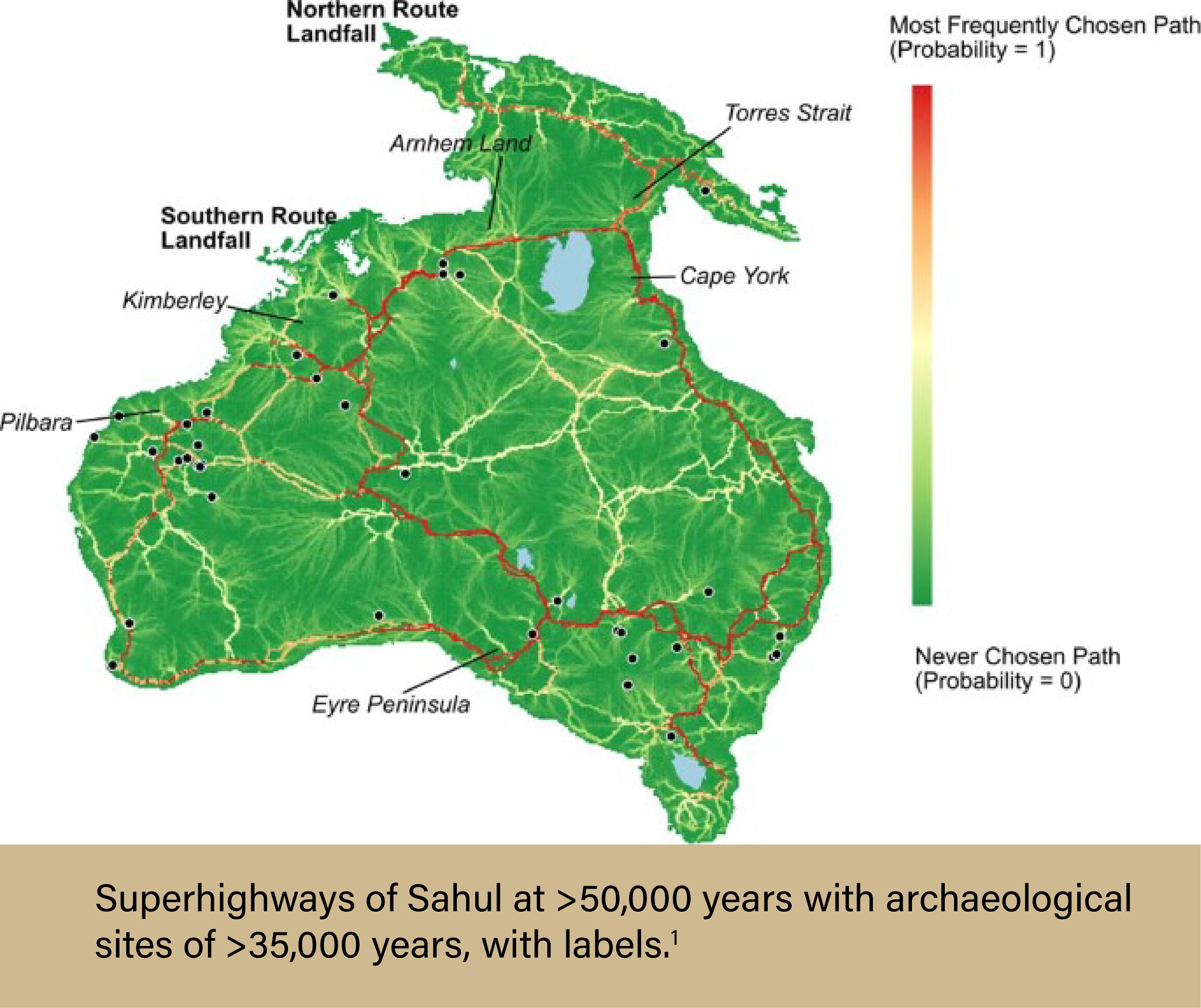

One recent example of archaeologists using extensive computational modeling is the research completed by a team led by Dr. Stefani A. Crabtree, an Assistant Professor in Socio-Environmental Modeling in the Department of Environment and Society of the Quinney College of Natural Resources at Utah State University.1 The team wanted to investigate how humans populated Sahul (a paleocontinent consisting of modern-day Australia, Tasmania, and New Guinea) tens of thousands of years ago. To do this, the team needed substantial computing power. First, they needed to create the most comprehensive digital elevation model of Sahul ever constructed. This allowed the team to determine viewsheds, watersheds, and least-cost pathways, which were then combined into one mega-model. From here, the researchers added information about known archaeological sites, which weighted the probability of each travel path created. Of the 125 billion potential travel paths across the continent, seven “super-highways” emerged. Archaeologists now have a model of how people likely traveled across the continent and can use that information to look for new archaeological sites.

Agent-based modeling (ABM) is even more in-depth and computationally expensive than terrain analysis. ABM is a type of computer simulation modeling that takes into account real-world factors, such as geography, social relationships, and individual differences. These models simulate interactions between people, places, objects, and time by representing individuals moving throughout their specific environment, behaving as normal humans would behave. ABM is an incredibly useful tool in many research fields, and archaeology is no exception. But because of the computational cost, utilizing HPC is essential for successfully running these simulations.

Agent-based models are built on the idea that every individual is unique, and each will act in their own way as they move throughout life. Allowing for this heterogeneity provides researchers with the most accurate simulations, but as each individual within the model needs to be its own data point, the simulations require a massive amount of computing power. Add into the equation regional differences in landscapes, travel paths, and climate—amongst other factors—and the ability for personal computers to run these simulations quickly disappears. Enter high-performance computing. HPC systems are explicitly designed for such use cases and can easily handle large and complex simulations. Many HPC systems have hundreds, if not thousands, of nodes (think: 1 node = 1 personal computer, roughly) that can all work in unison on a single task. For example, Purdue University’s Anvil supercomputer contains 1000 compute nodes, 32 large memory nodes, and 16 GPU nodes. The Anvil system is extraordinarily powerful and has been used for epidemiological ABM simulations that took into account the entire population of the United States. Although the data sets for these simulations were massive (~300 million “agents” with individual-level resolution), Anvil was able to provide efficient and reliable results to the researchers. Given that most archaeological ABM simulations would require the replication of far fewer people, a system like Anvil would be a boon to archaeologists in need of such modeling. But why exactly would archaeologists need ABM?

Since agent-based models simulate realistic populations, complete with a proper distribution of age, gender, job type or communal role, as well as geographical location and individual behavior, they are beneficial for archaeologists who want to obtain a better understanding of a historical landscape, including how information, goods, and populations traveled throughout a region. ABM allows researchers to experiment with populations and societies in silico, testing theories and comparing results with archaeological evidence to provide historical clarity. These simulations can give insight into bygone complex systems, and their potential for use in archaeology is endless. Current examples vary widely, ranging from the study of Ancient Roman trade networks to determining why France is a world-renowned wine region when wine grapes are not native to the area. But regardless of the research specifics, ABM studies need the advanced computing power that HPC provides.
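For readers who have never seen one, the following is a deliberately tiny agent-based model written in Python. It is a sketch under stated assumptions, not a reconstruction of any published study: the grid size, the number of agents, the `mobility` attribute, and the random-walk movement rule are all invented for illustration. Each agent is its own object with its own mobility, which is the heterogeneity described above, and the model records how often each grid cell is visited.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    row: int
    col: int
    mobility: int  # maximum cells moved per step; differs between agents


def simulate(n_agents=50, size=20, steps=100, seed=42):
    """Run a toy random-walk ABM and return (agents, visit_counts)."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    center = size // 2
    agents = [Agent(center, center, rng.randint(1, 3)) for _ in range(n_agents)]
    visits = [[0] * size for _ in range(size)]

    for _ in range(steps):
        for agent in agents:
            # Each agent moves up to `mobility` cells in each direction,
            # and is clamped so that it stays on the grid.
            d_row = rng.randint(-agent.mobility, agent.mobility)
            d_col = rng.randint(-agent.mobility, agent.mobility)
            agent.row = min(size - 1, max(0, agent.row + d_row))
            agent.col = min(size - 1, max(0, agent.col + d_col))
            visits[agent.row][agent.col] += 1
    return agents, visits


agents, visits = simulate()
busiest = max(max(row) for row in visits)
print(f"{len(agents)} agents, busiest cell visited {busiest} times")
```

Every agent is updated at every time step, so the work grows with the number of agents multiplied by the number of steps. Scaling this toy from 50 agents on a 20 by 20 grid to a realistic population on a real landscape, with far richer behavior rules, is where the computational cost discussed above comes from.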

3D Reconstruction and Digital Preservation

One problem that every archaeologist must contend with is the destructive force of time. Whether through natural disasters, erosion, or human interference, time lays waste to objects and monuments of the past. Even modern heritage sites and archaeological artifacts protected by the most stringent of policies and efforts will not escape unscathed; it’s just a matter of time. But there are ways that archaeologists can circumvent this problem. Using in-depth photogrammetry, archaeologists can reconstruct 3D models of historic landmarks based on 2D images, create models based on historical information and physical remains, and digitally preserve these structures for future generations.

3D reconstruction can be achieved via many different methods, usually determined by object size, location, and research funding. At the basic level, the process begins with data collection, and 3D reconstruction requires a lot of data. Spatial and object recording options include taking multiple photographs from all angles (photogrammetry), 3D laser scanning, LiDAR scanners, total station theodolites, sonar sensors, and optical sensors. Oftentimes, multiple recording options are employed to ensure accurate data collection that includes relevant geospatial and landscape information, which provides more context for researchers.
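The core idea behind recovering 3D shape from 2D photographs can be shown with the simplest possible case: two identical cameras side by side, looking in the same direction. A point that appears shifted by d pixels between the two images lies at a depth of Z = f × B / d, where f is the focal length in pixels and B is the distance between the cameras. The Python sketch below applies that formula; the function name and the example numbers are hypothetical, and real photogrammetry software solves a much harder version of this problem across hundreds or thousands of overlapping photographs taken from unknown positions.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Depth in meters of a point seen by two side-by-side cameras.

    Assumes an idealized, rectified stereo pair: identical cameras,
    parallel viewing directions, and a known baseline between them.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers: 1000 px focal length, cameras 0.5 m apart.
for disparity in (50, 25, 10):
    depth = depth_from_disparity(1000, 0.5, disparity)
    print(f"shift of {disparity} px -> point is {depth} m away")
```

Nearby points shift a lot between the two photographs and distant points shift very little. Repeating this kind of calculation for millions of matched points across many images is one reason that 3D reconstruction is so demanding computationally.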

After collecting the data, researchers use a variety of software packages to develop and complete the 3D reconstruction models. No matter the chosen method, researchers need a great deal of computing power. With HPC, these processes can be expedited, quickly giving researchers the needed results. Not only is this a benefit for archaeologists working at their home institutions, but it can potentially help those working in the field. For example, an archaeologist working on-site could use 3D reconstruction methods to virtually recreate a structure that is in shambles and partially buried underground. Instead of waiting until they return to their home institution to create the model for study, they could upload their data from the field and use an HPC cluster to rapidly model the structure. Based on the information collected from the site, the 3D model is created, providing them with a highly detailed rendering. Once the completed 3D model is obtained, the archaeologist may notice that pieces or areas typically associated with that type of structure are missing. This could indicate locations at the site that should be explored through further excavation. If the researcher could not produce the 3D model quickly, they might miss the opportunity to excavate more while still in the field.

3D reconstruction can also advance the field of archaeology by allowing researchers who cannot make field visits to study areas and objects from anywhere in the world. Digitally preserving artifacts and heritage sites not only enables the widespread study of archaeological evidence, but will also allow people of the future to study and appreciate these things long after the originals have been destroyed. One notable program using HPC for 3D reconstruction and digital preservation purposes is the Visualising Heritage group from the School of Archaeological and Forensic Sciences at the University of Bradford. Their work involves imaging and visualizing various subjects of archaeological and anthropological importance, including bones, bodies, artifacts, archaeological sites, heritage structures, and both terrestrial and marine landscapes. An example project of theirs is the 3D reconstruction and exploration of Fountains Abbey, a UNESCO World Heritage Site located in North Yorkshire, England. In 2021, the team was able to use above-ground and below-ground digital data to provide more detail of a burial site and a tannery, which they helped discover, on the west side of the church.2 Another of their projects, Digitised Diseases, involved creating an open-access compendium of digitized human bones so that researchers across the planet can study pathological type specimens from archaeological and historical medical collections. Thanks to 3D reconstruction, all this archaeological evidence has been saved for posterity, even if the bones and stones get ground to dust.

Remote Sensing

Remote sensing refers to the collection of data from afar. It enables a researcher to obtain information on an area without having to travel to the location physically. For archaeologists, this typically involves capturing photographs or laser scans via aerial vehicles, such as planes or drones, or spaceborne surveillance systems, like satellites. This information can then be used to map landscapes, plan research projects and field expeditions, as well as detect any damage or change to an area or archaeological site. In short, remote sensing is supremely useful for archaeologists. There is one problem, however: Remote sensing technology accumulates a massive amount of data.

Typically, the more data available to a researcher, the better. But when a single satellite can obtain photographs covering millions of square kilometers of land, the issue of how to analyze the data so that it’s useful quickly presents itself. Until recently, this was a nearly unsolvable problem. Individuals would need to study each photograph or scan one by one, searching for unique markers that may indicate an archaeological site. The sheer amount of time and effort required made much of the data effectively useless. But recent advances in artificial intelligence (AI) are making it so that this process can be outsourced to HPC systems via object detection and deep learning algorithms.

Object detection is a computer vision technology whereby objects of interest, such as humans, animals, cars, and buildings, are located within images or videos. For example, using object detection software, a computer can pick out a burial mound from a LiDAR scan of a landscape. Deep learning is a method of AI that teaches computers to process data in a way that simulates human decision-making. It is based on neural networks that allow the computer to “learn” from large data sets. When combined with object detection, deep learning enables systems to learn how to better identify objects without human intervention. Continuing the previous example, deep learning could train the computer to differentiate between a burial mound and naturally occurring variations in the landscape, such as hills. The computer’s newfound “ability” could then be used to quickly sift through an entire region’s worth of remotely-sensed data to find every burial mound in the area, all without needing the direct involvement of a human researcher.
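What "learning" means here can be illustrated with a single artificial neuron, the basic building block that deep networks stack by the millions. The Python sketch below trains a perceptron to separate burial mounds from natural hills using two measurements, diameter and height. It is not a deep learning model, and the training values are invented for illustration; a real system would learn directly from the scan data rather than from two hand-picked numbers.

```python
# Hypothetical training examples: (diameter in m, height in m, label).
# Label 1 = burial mound, label 0 = natural hill. All values are invented.
TRAINING = [
    (18.0, 1.5, 1), (25.0, 2.0, 1), (30.0, 3.0, 1), (22.0, 2.5, 1),
    (150.0, 12.0, 0), (220.0, 20.0, 0), (180.0, 9.0, 0), (300.0, 25.0, 0),
]


def score(weights, bias, diameter_m, height_m):
    """Weighted sum of the (rescaled) measurements; positive means 'mound'."""
    return weights[0] * diameter_m / 100.0 + weights[1] * height_m / 10.0 + bias


def train_perceptron(samples, epochs=1000, learning_rate=0.1):
    """Nudge the weights after every mistake until all samples are correct."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for diameter, height, label in samples:
            predicted = 1 if score(weights, bias, diameter, height) > 0 else 0
            error = label - predicted  # +1, 0, or -1
            if error != 0:
                weights[0] += learning_rate * error * diameter / 100.0
                weights[1] += learning_rate * error * height / 10.0
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:
            break  # every training example is classified correctly
    return weights, bias


def classify(model, diameter_m, height_m):
    weights, bias = model
    return "mound" if score(weights, bias, diameter_m, height_m) > 0 else "hill"


model = train_perceptron(TRAINING)
print(classify(model, 20.0, 2.0))    # a small, low feature
print(classify(model, 250.0, 18.0))  # a broad, tall feature
```

Nobody tells the program where the boundary between mound and hill lies; it adjusts its own weights from labeled examples. Deep learning applies the same principle with vastly more weights and vastly more data, which is why training is so computationally expensive.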

Understandably, this technological advancement is making waves in the field, helping usher in a new era of computational and digital archaeology. With object detection and deep learning algorithms, it is feasible to think that a single team of archaeologists could find dozens, if not hundreds, of previously unknown archaeological sites or features within a region. The laborious task of analyzing remotely-sensed data could be handled with a speed and efficiency (and potentially accuracy) simply unattainable by humans, leaving more time for archaeologists to interpret results and conduct fieldwork. But running AI-driven software is computationally costly. A personal laptop or computer isn’t powerful enough to quickly manage such large data sets or run complex AI programs. With high-performance computing, archaeologists can take full advantage of these emerging technologies. Instead of needing weeks or months to properly train the computer with deep learning algorithms, HPC systems can accomplish the task in days, or even hours.

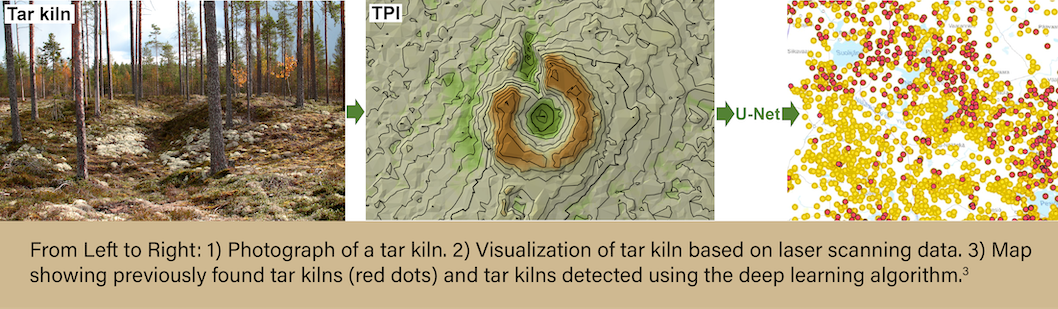

The amount of AI-based research is currently limited, but multiple studies have shown the effectiveness of these methods. One such study involved a Finnish team composed of individuals from multiple universities and organizations nationwide.3 The team used deep learning methods to analyze recently released Finnish high-density Airborne Laser Scanning (ALS) data of the boreal taiga forest zone. They aimed to detect archaeological traces of tar production kilns in the region, specifically in the Näljänkä, Kuivaniemi, and Hossa areas. The total land area analyzed was roughly 7,068 km². The team employed a semi-automated deep learning algorithm to detect the tar kilns from the ALS data. Overall, this method enabled the efficient location of many previously unknown archaeological features and proved to be faster and more accurate than manual methods.

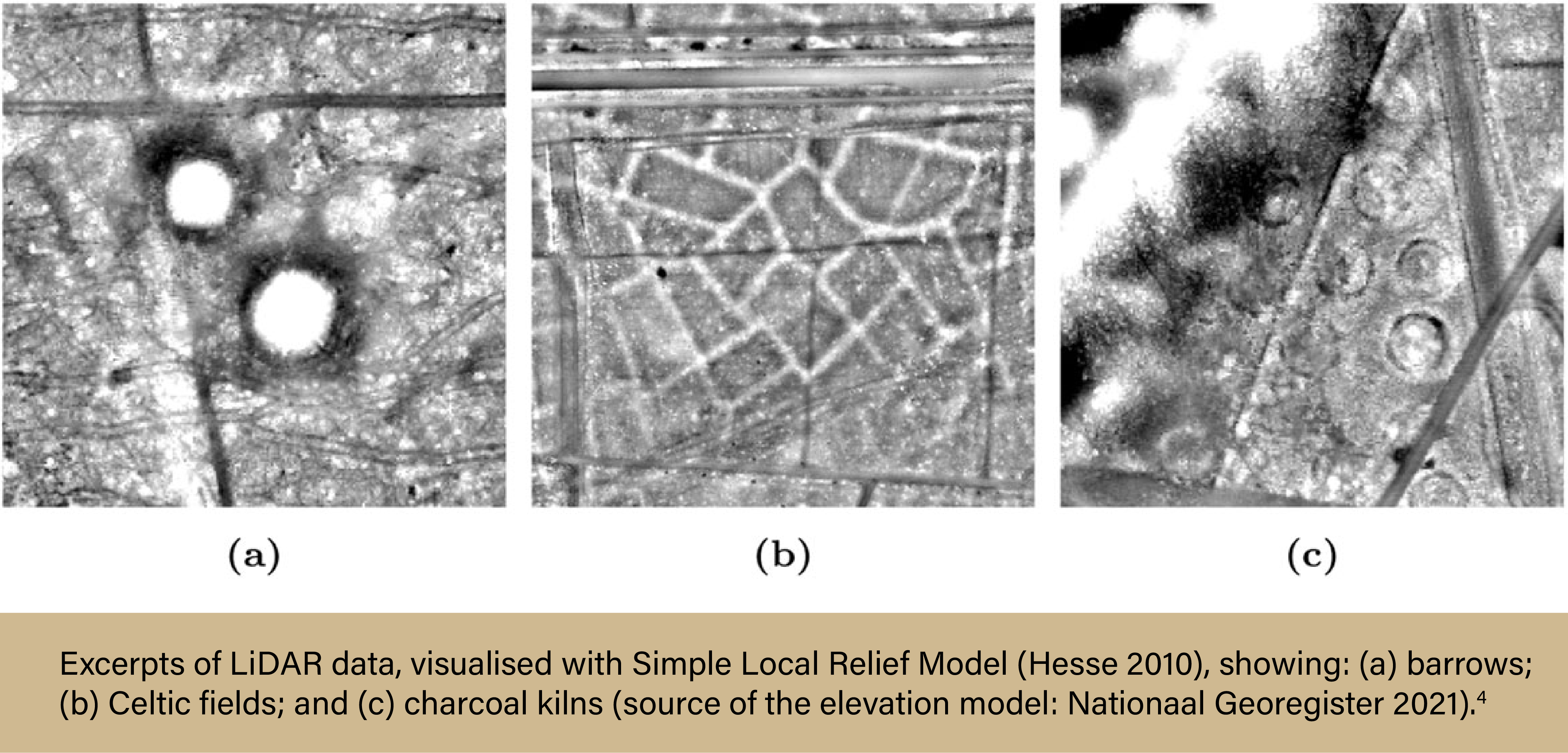

Another team, composed of archaeologists from Leiden University in the Netherlands and computer scientists from the French graduate engineering school ESIEA, set out to develop and implement a deep learning object detection approach for finding multiple types of archaeological objects in remotely-sensed data.4 The team used open-source LiDAR data from a region in the Netherlands known as the Veluwe to search for three types of objects: barrows, Celtic fields, and charcoal kilns. Roughly 4,500 images were included in the data sets, far more than any one human could (or would ever want to) analyze. In the end, the team found that their model was able to accurately detect and categorize all three types of archaeological objects in the remotely-sensed data.

The ability of AI to detect objects in remotely-sensed data will only continue to improve, and the applications of remote sensing in archaeology are endless. Imagine automatically detecting damage to heritage sites from satellite images, or using open-source LiDAR data to find ancient aqueducts in a region where no civilization was known to exist, all within a matter of hours. The possibilities are certainly tantalizing for any archaeologist. All that’s needed to advance the field is for more and more researchers to utilize HPC resources in their own studies. Fortunately, the barrier to entry for HPC is lower than ever before, and while commercial systems are extremely costly, researchers can access many academic systems for free.

How to Get ACCESS to HPC Systems

Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support (ACCESS) is an NSF-funded program that manages access to the national research cyberinfrastructure (CI) resources. Researchers from any domain wanting to use HPC resources can go through the ACCESS program to obtain allocations to different systems, allowing them free use of these resources from anywhere in the country. The example system given above—Purdue’s Anvil supercomputer—is available through the ACCESS program.

Anvil is Purdue University’s most powerful supercomputer, providing researchers from diverse backgrounds with advanced computing capabilities. Built through a $10 million system acquisition grant from the National Science Foundation (NSF), Anvil supports scientific discovery by providing HPC resources to thousands of researchers throughout the United States. Archaeologists wanting to use Anvil may request access via the ACCESS allocations process. More information about Anvil is available on Purdue’s Anvil website. Anyone with questions should contact anvil@purdue.edu. Anvil is funded under NSF award No. 2005632.

References:

- Crabtree, Stefani A., Devin A. White, Corey J. A. Bradshaw, Frédérik Saltré, Alan N. Williams, Robin J. Beaman, Michael I. Bird, and Sean Ulm. 2021. “Landscape Rules Predict Optimal Superhighways for the First Peopling of Sahul.” Nature Human Behaviour, April. https://doi.org/10.1038/s41562-021-01106-8.

- University of Bradford. “Fountains Abbey – Visualising Heritage,” http://visualisingheritage.org/fountains-abbey/.

- Anttiroiko, Niko, Floris Jan Groesz, Janne Ikäheimo, Aleksi Kelloniemi, Risto Nurmi, Stian Rostad, and Oula Seitsonen. 2023. “Detecting the Archaeological Traces of Tar Production Kilns in the Northern Boreal Forests Based on Airborne Laser Scanning and Deep Learning.” Remote Sensing 15, no. 7: 1799. https://doi.org/10.3390/rs15071799.

- Olivier, Martin, and Wouter Verschoof-van der Vaart. 2021. “Implementing State-of-the-art Deep Learning Approaches for Archaeological Object Detection in Remotely-sensed Data: The Results of Cross-domain Collaboration.” Journal of Computer Applications in Archaeology 4 (1): 274–289. https://doi.org/10.5334/jcaa.78.

Written by: Jonathan Poole, poole43@purdue.edu